Study on Algorithm of Workpiece Detection via Improved EfficientDet and Histogram Equalization

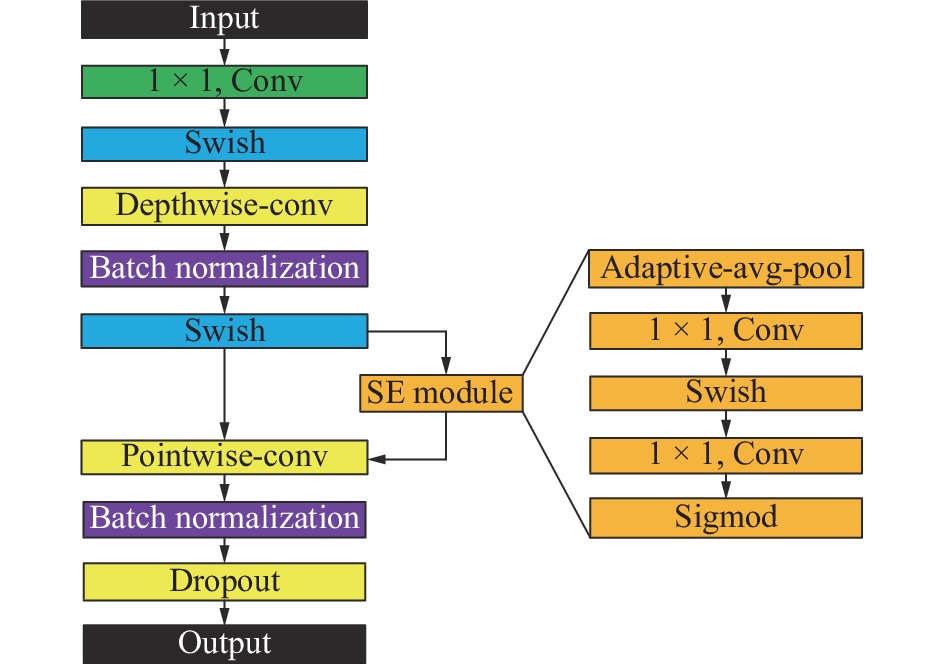

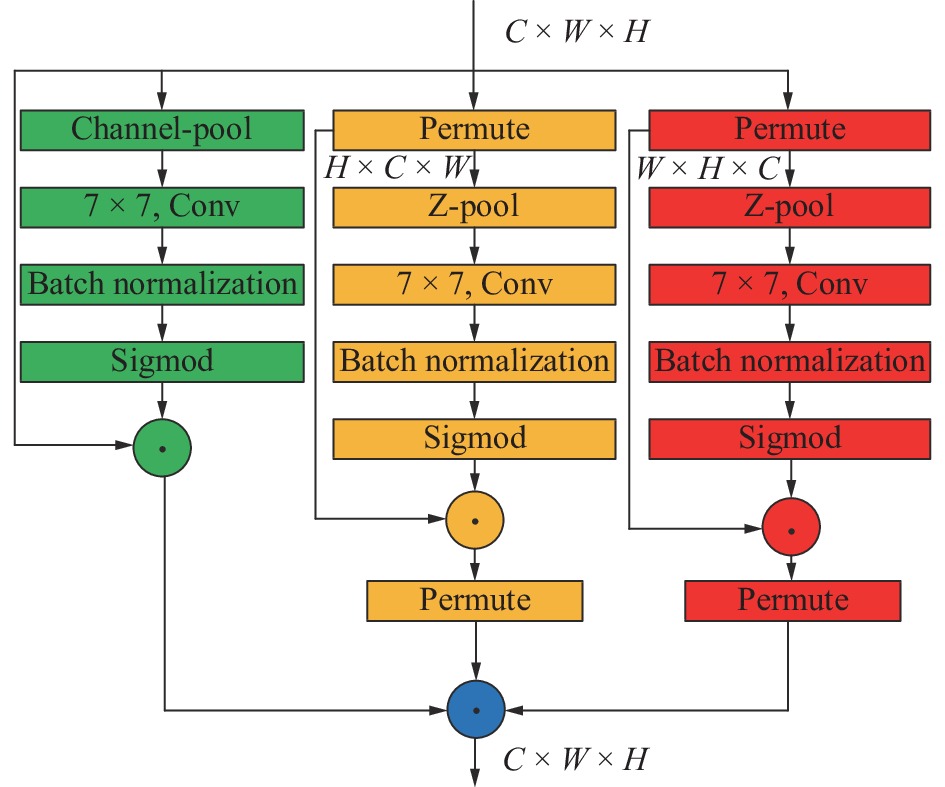

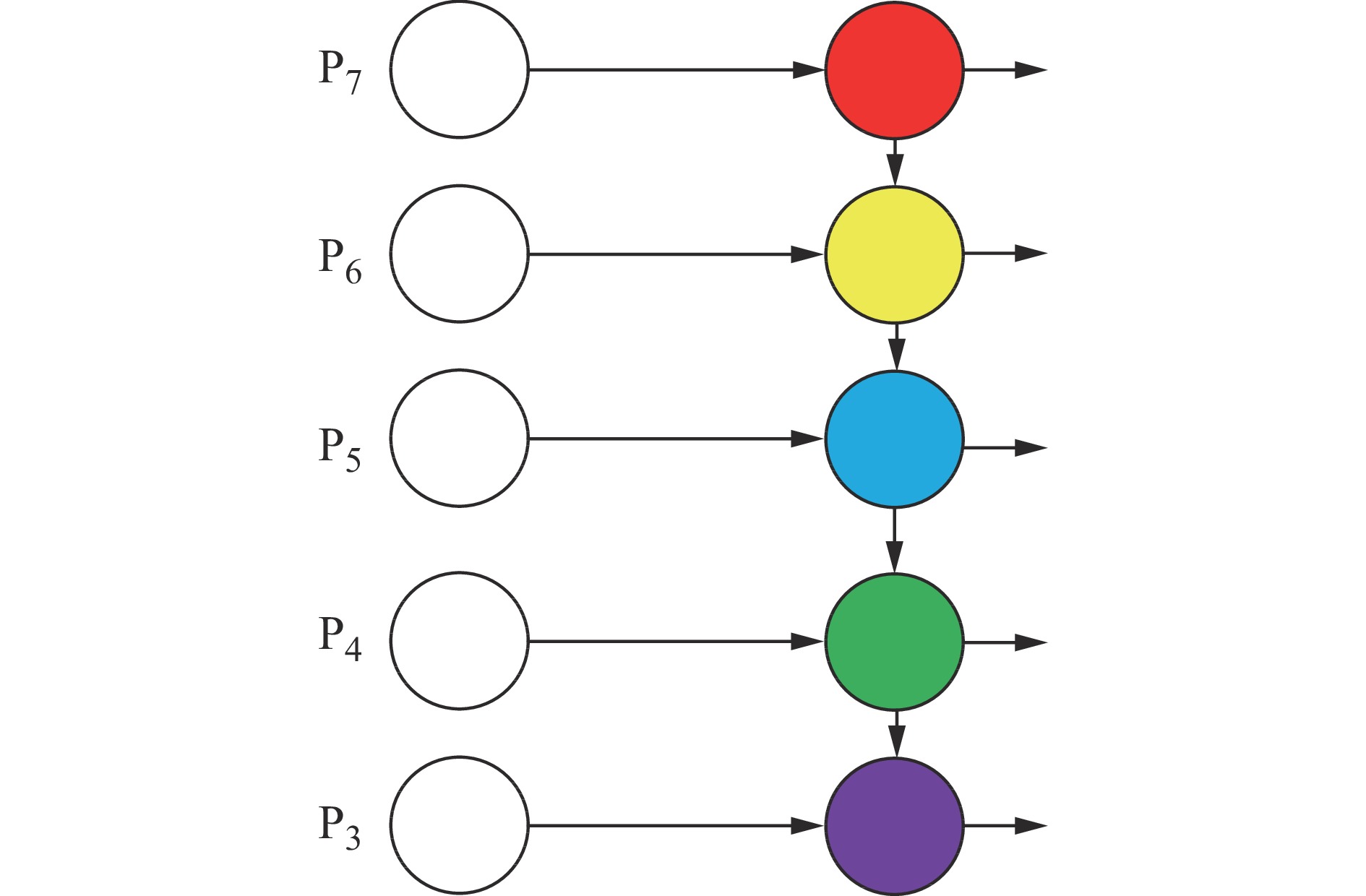

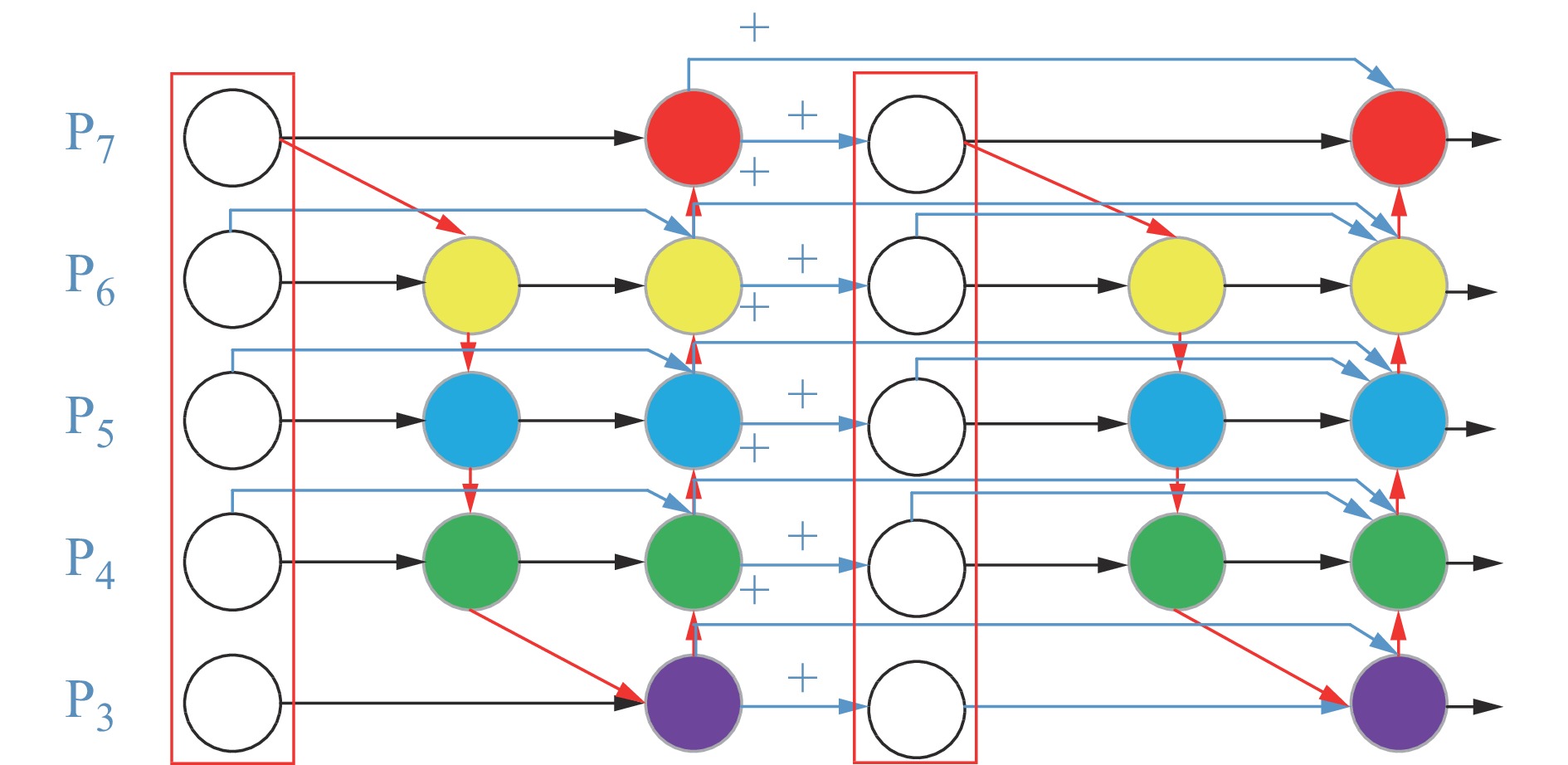

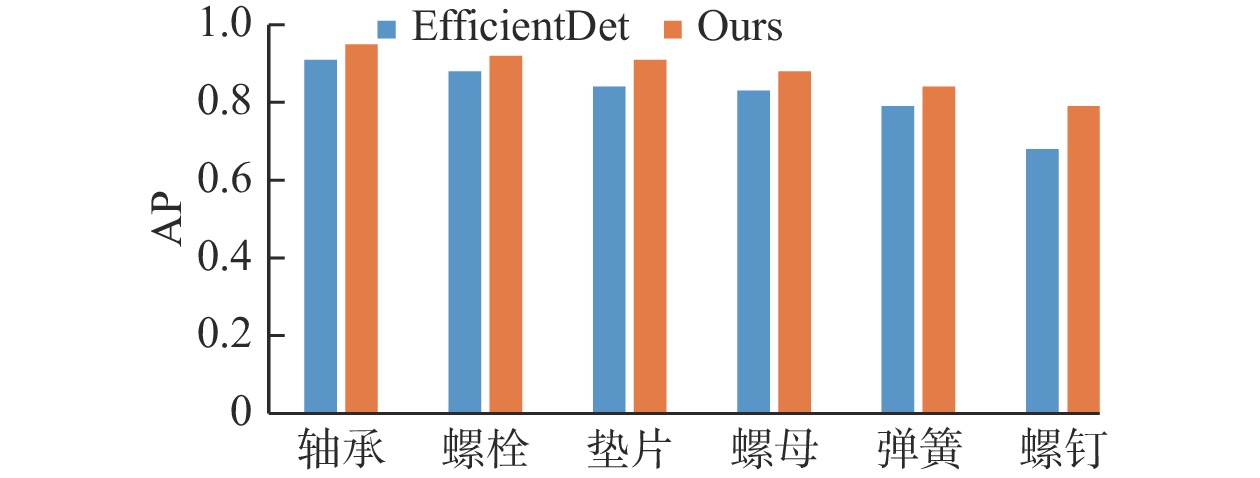

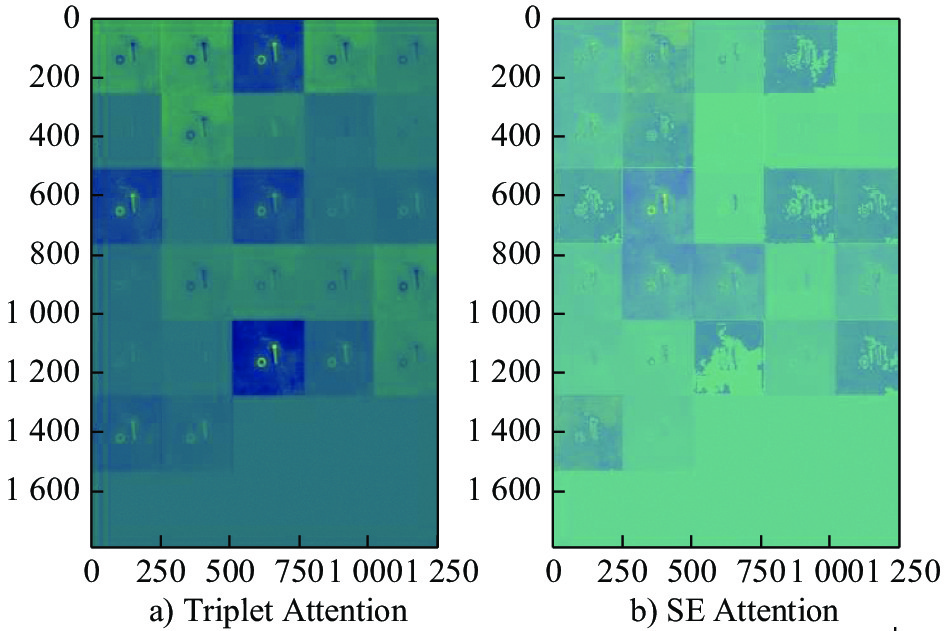

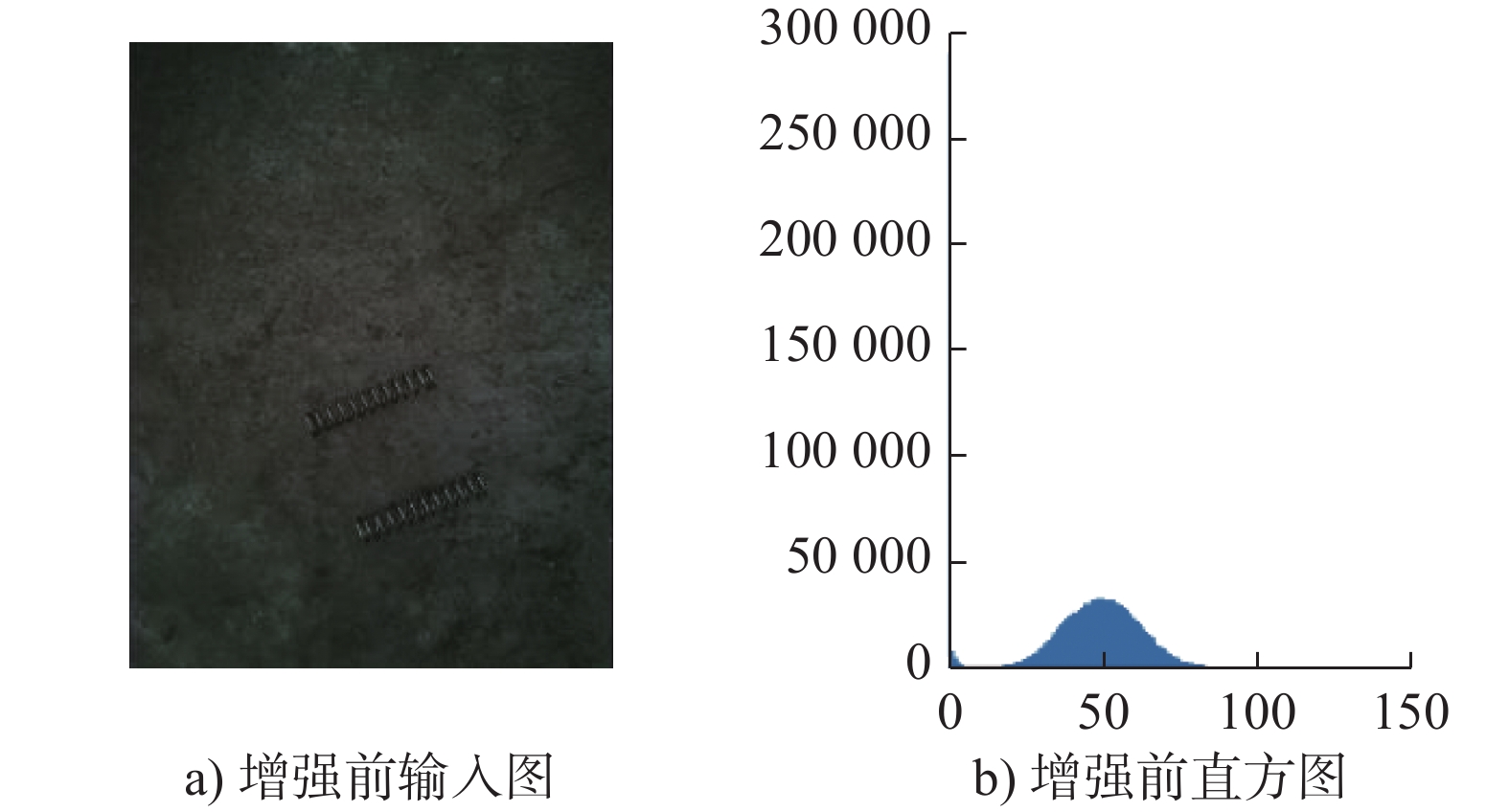

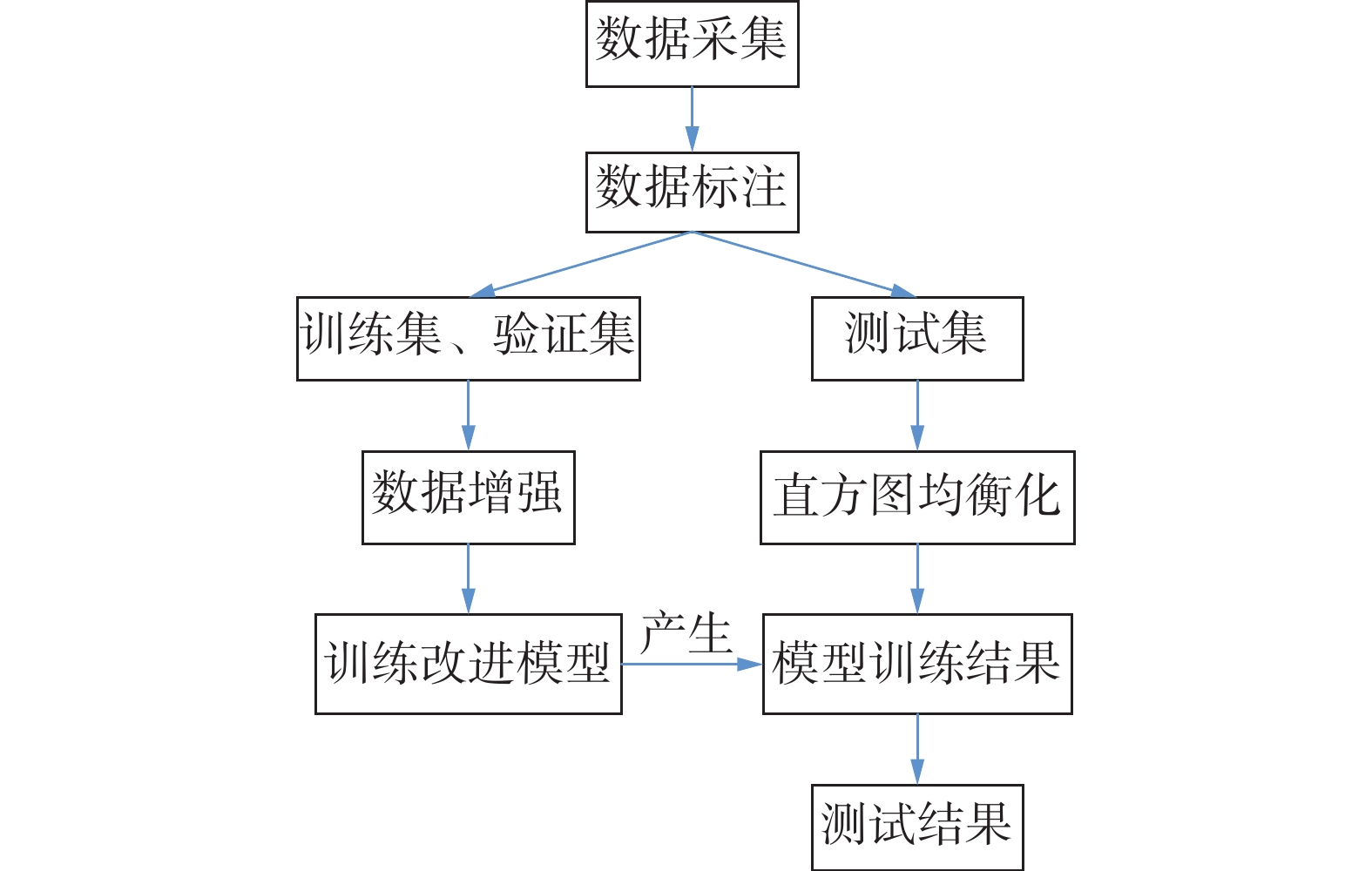

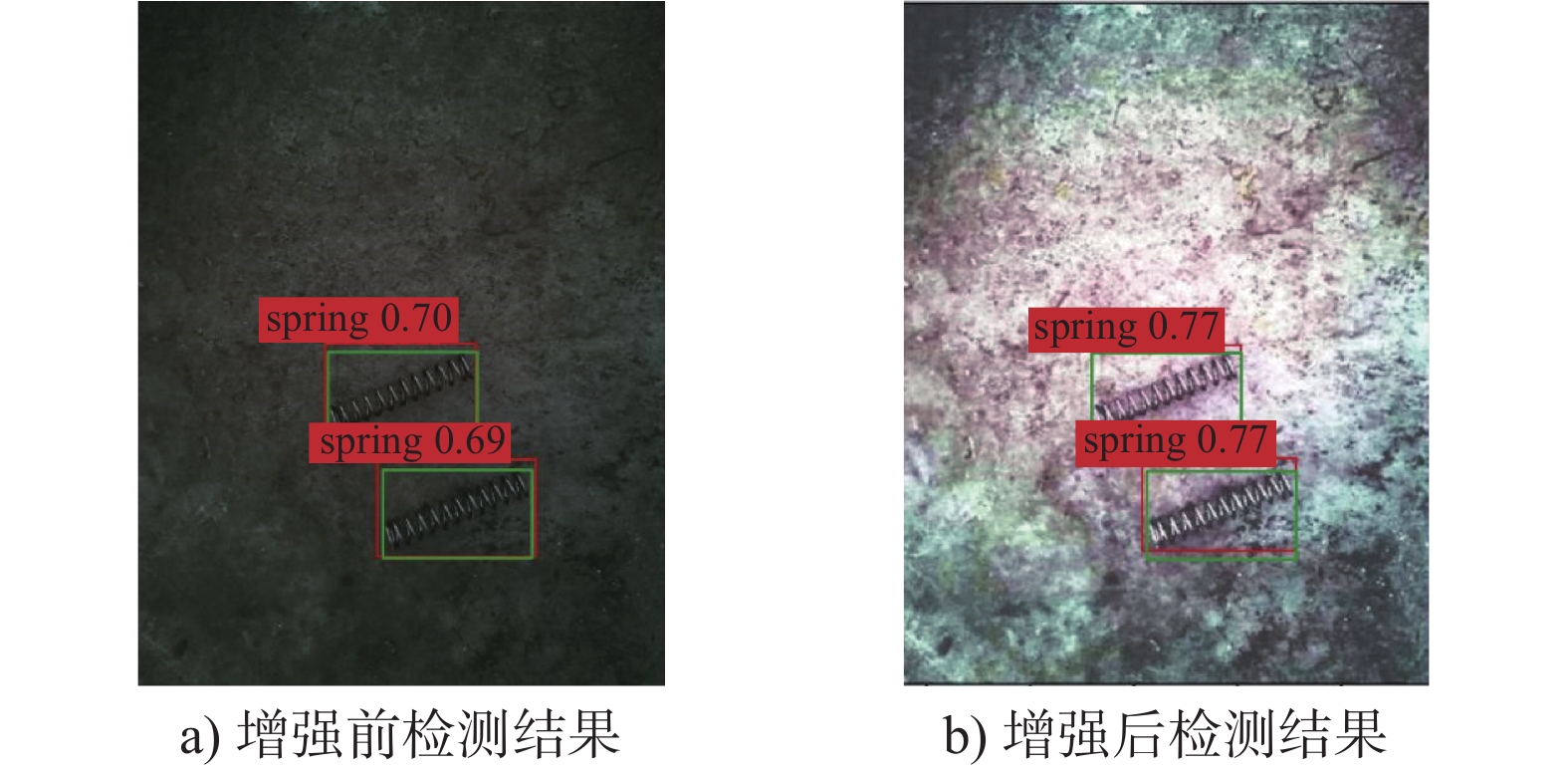

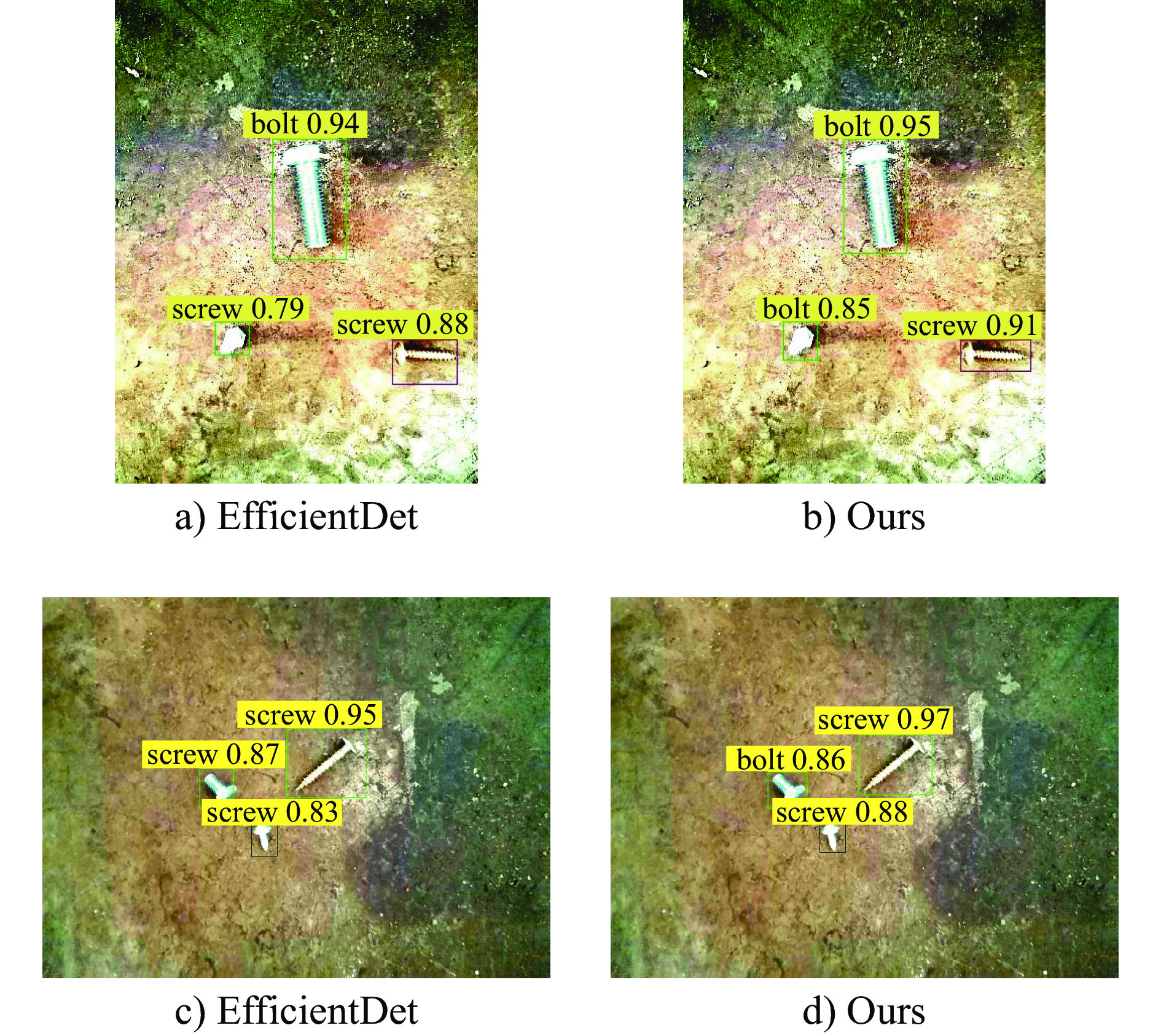

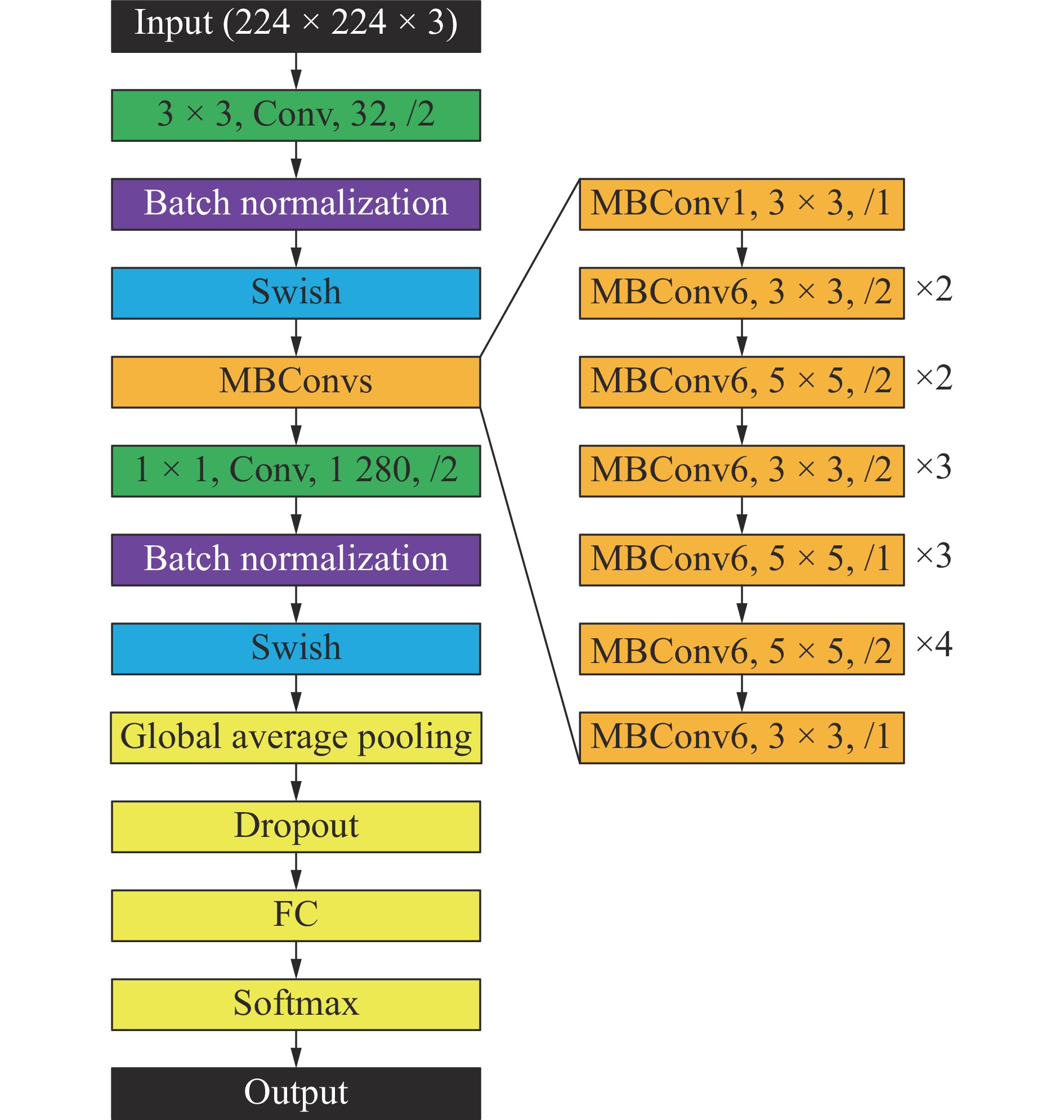

Abstract: To address the low detection and localization accuracy and slow speed of industrial robots when detecting workpieces in assembly-line machining environments, an improved EfficientDet neural network model for workpiece detection is proposed. EfficientNet is adopted as the backbone feature extraction network, and the Triplet Attention mechanism replaces the original squeeze-and-excitation (SE) attention mechanism. Drawing on the idea of recursive feature fusion, a Recursive-BiFPN structure (a recursive form of the bidirectional cross-scale, weighted feature fusion network) is used for feature fusion. To handle the imbalance between positive and negative samples, generalized focal loss is used in place of the original focal loss function. Given the specific production environment of machining, histogram equalization is applied to raise the contrast of the image data. A self-built dataset was collected with an industrial camera and used to train the model. Under complex industrial production conditions, the improved EfficientDet raises mAP by 6.1 percentage points over the original network and reaches 72 frames per second. The experimental results show that the algorithm can locate and detect workpieces quickly and accurately in the production environment, offering a new solution for practical production needs.
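The abstract names the two loss functions but does not give their form. As a minimal sketch, the block below writes out the standard binary focal loss and the quality focal loss (QFL) term of generalized focal loss as defined in the papers that introduced them. The function names, the default `alpha`, `gamma`, and `beta` values, and the `EPS` clamp are assumptions for illustration, not settings reported here; the distribution focal loss term of generalized focal loss is omitted.

```python
import math

EPS = 1e-12  # assumed clamp to keep log() finite; not from the source


def _clamp(p):
    """Keep a probability strictly inside (0, 1)."""
    return min(max(p, EPS), 1.0 - EPS)


def focal_loss(p, y, alpha=0.25, gamma=2.0):
    """Binary focal loss for predicted probability p and hard label y in {0, 1}.

    FL = -alpha_t * (1 - p_t)^gamma * log(p_t)
    """
    p = _clamp(p)
    p_t = p if y == 1 else 1.0 - p
    alpha_t = alpha if y == 1 else 1.0 - alpha
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


def quality_focal_loss(sigma, y, beta=2.0):
    """Quality focal loss for predicted score sigma and soft target y in [0, 1].

    QFL = -|y - sigma|^beta * [(1 - y) * log(1 - sigma) + y * log(sigma)]
    """
    sigma = _clamp(sigma)
    cross_entropy = -((1.0 - y) * math.log(1.0 - sigma) + y * math.log(sigma))
    return abs(y - sigma) ** beta * cross_entropy


if __name__ == "__main__":
    # A confident correct prediction is down-weighted relative to a hard one.
    print(focal_loss(0.9, 1), focal_loss(0.6, 1))
    # QFL accepts a soft target such as an IoU-based quality score.
    print(quality_focal_loss(0.5, 0.8))
```

With a hard label `y = 1`, QFL reduces to the unweighted focal loss with `gamma = beta`, which is why it can be read as a generalization: the target may be a continuous quality score rather than a 0/1 label.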

Key words:

- workpiece detection

- EfficientDet model

- Triplet Attention

- generalized focal loss

Table 1. Comparison of workpiece detection models

| Model | P | R | mAP | IoU | FPS |
| --- | --- | --- | --- | --- | --- |
| SSD | 0.793 | 0.801 | 0.823 | 0.819 | 28.5 |
| Faster R-CNN | 0.832 | 0.838 | 0.852 | 0.865 | 12.05 |
| YOLOv3 | 0.779 | 0.749 | 0.751 | 0.821 | 34 |
| EfficientDet | 0.858 | 0.897 | 0.821 | 0.864 | 66 |
| Ours | 0.936 | 0.933 | 0.882 | 0.902 | 72 |

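Table 2. Confusion matrix computation results

| Workpiece | Bearing | Bolt | Washer | Nut | Screw | Spring |
| --- | --- | --- | --- | --- | --- | --- |
| Bearing | 185 | 0 | 6 | 5 | 0 | 0 |
| Bolt | 0 | 220 | 0 | 3 | 22 | 0 |
| Washer | 3 | 0 | 190 | 3 | 0 | 1 |
| Nut | 0 | 5 | 3 | 175 | 3 | 0 |
| Screw | 0 | 19 | 0 | 4 | 227 | 0 |
| Spring | 0 | 0 | 3 | 1 | 0 | 192 |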
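Per-class precision and recall can be derived from the confusion matrix in Table 2. The sketch below does this in plain Python. It assumes that rows are true classes and columns are predicted classes, which this page does not state; if the convention is the other way round, the two metrics swap. The English class names and the function names are illustrative.

```python
# Counts copied from Table 2.
# ASSUMPTION: rows = true class, columns = predicted class (not stated in the source).
CLASSES = ["bearing", "bolt", "washer", "nut", "screw", "spring"]
CONFUSION = [
    [185, 0, 6, 5, 0, 0],
    [0, 220, 0, 3, 22, 0],
    [3, 0, 190, 3, 0, 1],
    [0, 5, 3, 175, 3, 0],
    [0, 19, 0, 4, 227, 0],
    [0, 0, 3, 1, 0, 192],
]


def per_class_metrics(matrix):
    """Return a list of (precision, recall) pairs, one per class."""
    n = len(matrix)
    col_sums = [sum(matrix[r][c] for r in range(n)) for c in range(n)]
    metrics = []
    for i in range(n):
        true_positive = matrix[i][i]
        row_sum = sum(matrix[i])
        recall = true_positive / row_sum if row_sum else 0.0
        precision = true_positive / col_sums[i] if col_sums[i] else 0.0
        metrics.append((precision, recall))
    return metrics


def overall_accuracy(matrix):
    """Fraction of all samples that lie on the diagonal."""
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(len(matrix)))
    return correct / total


if __name__ == "__main__":
    for name, (precision, recall) in zip(CLASSES, per_class_metrics(CONFUSION)):
        print(f"{name:8s} precision={precision:.3f} recall={recall:.3f}")
    print(f"overall accuracy={overall_accuracy(CONFUSION):.3f}")
```

The largest off-diagonal counts in the table are between bolts and screws (22 and 19), so those two classes account for most of the confusion under either row/column convention.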

Table 3. Comparison of mAP and IoU before and after histogram equalization (HE) enhancement

| Model | mAP | IoU |
| --- | --- | --- |
| EfficientDet | 0.821 | 0.864 |
| Ours without HE | 0.840 | 0.873 |
| Ours with HE | 0.882 | 0.902 |
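Table 3 compares results with and without histogram equalization. As a rough illustration of that preprocessing step, the sketch below applies plain global histogram equalization to an 8-bit grayscale image stored as a list of rows. This page does not say which variant was used (global or adaptive) or how color channels were handled, so the function name `equalize_histogram` and the grayscale assumption are hypothetical.

```python
def equalize_histogram(image, levels=256):
    """Global histogram equalization of a grayscale image.

    `image` is a list of rows of integers in [0, levels).
    Returns a new image of the same shape with intensities remapped
    so that the cumulative distribution is approximately linear.
    """
    pixels = [value for row in image for value in row]
    total = len(pixels)
    if total == 0:
        return []

    # Histogram of intensity values.
    hist = [0] * levels
    for value in pixels:
        hist[value] += 1

    # Cumulative distribution function (as raw counts).
    cdf = []
    running = 0
    for count in hist:
        running += count
        cdf.append(running)

    # Smallest non-zero CDF value, i.e. the count of the darkest level present.
    cdf_min = next(c for c in cdf if c > 0)
    if total == cdf_min:
        # Constant image: nothing to stretch.
        return [row[:] for row in image]

    # Lookup table mapping each old level to its equalized level.
    scale = (levels - 1) / (total - cdf_min)
    lut = [max(0, round((c - cdf_min) * scale)) for c in cdf]
    return [[lut[value] for value in row] for row in image]


if __name__ == "__main__":
    # A low-contrast patch occupying only levels 100..130.
    patch = [[100, 110], [120, 130]]
    print(equalize_histogram(patch))
```

In the small example, intensities confined to the range 100 to 130 are spread across the full 0 to 255 range, which is the contrast increase the abstract refers to.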

