Exploring Target Recognition and Grasping Technology and Developing Vision Manipulator System


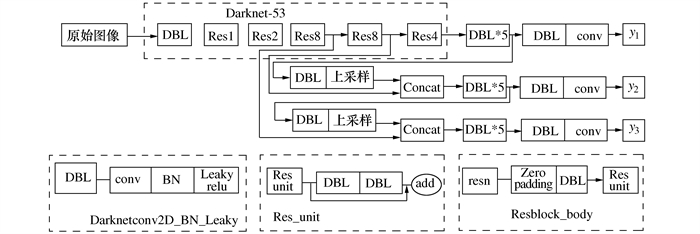

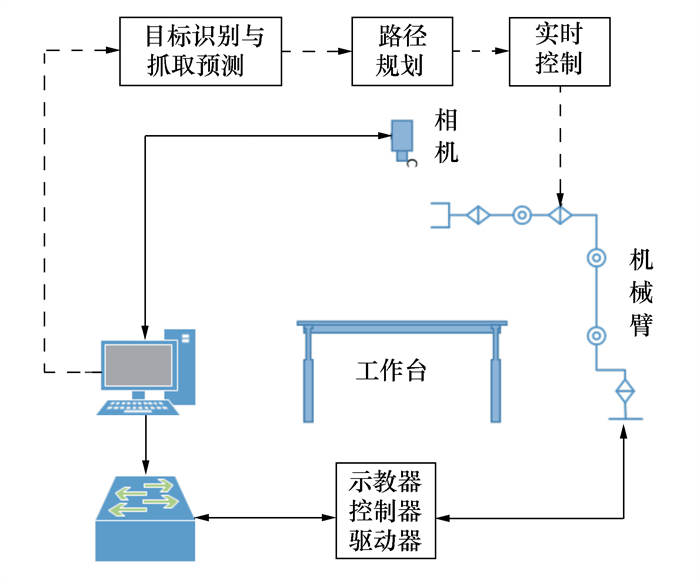

Abstract: To enable a vision manipulator to recognize and grasp targets automatically, this work builds on the DarkNet-53 deep learning detection algorithm and sets up an experimental target-grasping platform that incorporates the vision manipulator. Features are extracted within a deep learning framework, and YOLOv3 is used for fast target classification and detection. A five-parameter method based on DarkNet-53 is used to predict the target pose, and experiments are carried out on a physical prototype. The results show that the deep learning algorithm achieves fast classification and recognition of target objects together with analysis of the grasping region, and thus supports automatic recognition and grasping.
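The abstract names a five-parameter method for pose prediction without listing the parameters. As a minimal sketch, assume the oriented grasp rectangle widely used in grasp detection, (x, y, θ, w, h): the rectangle centre in image coordinates, its rotation, the gripper opening, and the jaw width. The class `Grasp` and the function `grasp_corners` are hypothetical names introduced only for illustration; they are not taken from the paper.

```python
import math
from dataclasses import dataclass


@dataclass
class Grasp:
    """Assumed five-parameter grasp rectangle (not confirmed by the paper)."""
    x: float      # centre, image x coordinate (pixels)
    y: float      # centre, image y coordinate (pixels)
    theta: float  # rotation about the centre, in degrees
    w: float      # extent along the rectangle's own x axis (gripper opening)
    h: float      # extent along the rectangle's own y axis (jaw width)


def grasp_corners(g: Grasp) -> list[tuple[float, float]]:
    """Return the four corners of the oriented rectangle in image coordinates."""
    c = math.cos(math.radians(g.theta))
    s = math.sin(math.radians(g.theta))
    # Corner offsets in the rectangle's own frame, before rotation.
    offsets = [(-g.w / 2, -g.h / 2), (g.w / 2, -g.h / 2),
               (g.w / 2, g.h / 2), (-g.w / 2, g.h / 2)]
    # Rotate each offset by theta, then translate to the centre.
    return [(g.x + dx * c - dy * s, g.y + dx * s + dy * c) for dx, dy in offsets]


if __name__ == "__main__":
    for corner in grasp_corners(Grasp(x=100, y=50, theta=30, w=40, h=20)):
        print(corner)
```

With such a representation, a detector only has to regress five numbers per object, and the corners can be recovered for visualisation or for comparison against a labelled grasp rectangle.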


Key words:

- deep learning

- target recognition

- pose prediction

- grasping


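Table 1 Deviation of the predicted results from the actual best grasp position and orientation

| Grasped object | Position deviation/mm | Orientation deviation/(°) |
| --- | --- | --- |
| Coiled cable | 0.64 | -3 |
| Parcel box | 1.25 | 2 |
| Storage box | 1.34 | 5 |
| Screwdriver | 0.48 | 4 |
| Small workpiece | 1.12 | -2 |
| Screw | 1.11 | 3 |
| Scissors | 1.20 | 5 |
| Tape | 1.16 | -5 |
| Toothbrush | 0.54 | 3 |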
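The per-object deviations in Table 1 can be summarised with a few lines of Python. The sketch below only re-uses the nine rows of the table; the English object names are translations, and the choice of summary statistics (mean and maximum of the absolute deviations) is an assumption made here, not something reported in the source.

```python
# (position deviation in mm, orientation deviation in degrees), from Table 1
deviations = {
    "coiled cable": (0.64, -3),
    "parcel box": (1.25, 2),
    "storage box": (1.34, 5),
    "screwdriver": (0.48, 4),
    "small workpiece": (1.12, -2),
    "screw": (1.11, 3),
    "scissors": (1.20, 5),
    "tape": (1.16, -5),
    "toothbrush": (0.54, 3),
}

positions = [pos for pos, _ in deviations.values()]
# Orientation errors are signed, so compare their magnitudes.
abs_angles = [abs(angle) for _, angle in deviations.values()]

mean_pos = sum(positions) / len(positions)
max_pos = max(positions)
mean_abs_angle = sum(abs_angles) / len(abs_angles)
max_abs_angle = max(abs_angles)

print(f"position deviation: mean {mean_pos:.2f} mm, max {max_pos:.2f} mm")
print(f"orientation deviation: mean |{mean_abs_angle:.1f}| deg, max |{max_abs_angle}| deg")
```

For these nine objects the mean position deviation comes out at roughly 0.98 mm and the mean absolute orientation deviation at roughly 3.6°, with worst cases of 1.34 mm and 5°.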

